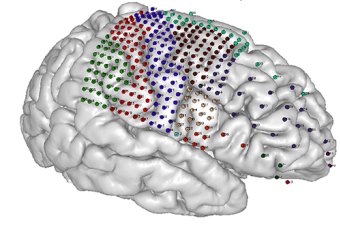

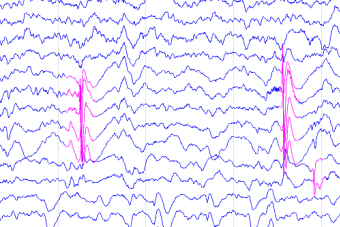

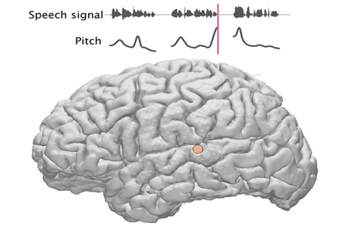

The Hamilton Lab is part of the NeuroComm Labs at UT Austin's Department of Speech, Language, and Hearing Sciences in the Moody College of Communication. We are also jointly affiliated with the Department of Neurology at UT Austin's Dell Medical School. Our research aims to determine how natural sounds, including speech, are represented by the human brain during speech perception and production, and how these representations change during development. We study how the human brain processes speech sounds using intracranial electrocorticography (ECoG) recordings from patients with intractable epilepsy who are undergoing surgery to treat their epilepsy. We also use noninvasive EEG and behavioral studies in healthy participants to study how the brain tracks speech in clean and noisy environments during perception and production. We use a combination of electrophysiology, behavior, neuroimaging, and computational modeling to address these questions.

We would not be able to do this work without the generous help of clinicians and our patient volunteers, who participate in listening tasks during their hospital stay. You can read more about our research on the Research page.

Funding Sources:

We are grateful for funding from the following organizations:

- The Texas Speech Language Hearing Foundation

- The National Institutes of Health - National Institute on Deafness and Other Communication Disorders

- Coleman Fung Foundation

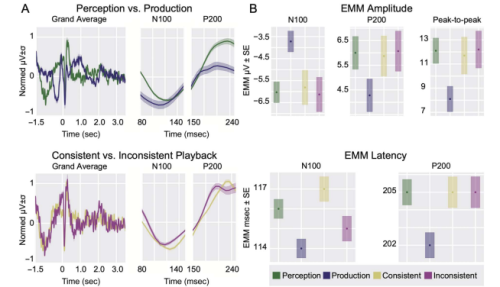

Speaker-Induced Suppression in EEG during a Naturalistic Reading and Listening Task

"Speaker-induced Suppression in EEG during a Naturalistic Reading and Listening Task" by Kurteff et al. is now out in the Journal of Cognitive Neuroscience. J Cogn Neurosci 2023; doi: https://doi.org/10.1162/jocn_a_02037

Lab News and Updates

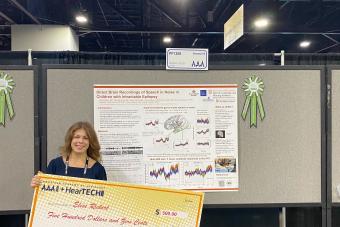

Elise receives the Jerger Award for Excellence in Research

Elise participated in a poster session at this year's American Academy of Audiology conference in Atlanta, Georgia. She received the Jerger Award for Excellence in Research for her work.

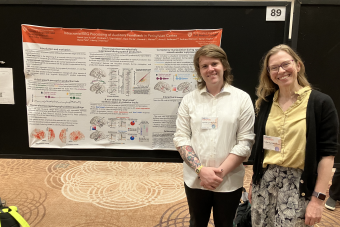

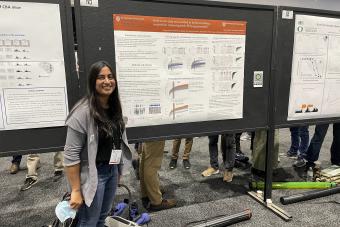

Lab goes to CNS 2024 in Toronto

Liberty and Garret attended the Cognitive Neuroscience Society (CNS) meeting in Toronto this year. Garret participated in a poster session and Liberty gave a talk.

Audiology Capstone Day

We had two students in the lab, Elise Rickert and Lydia Su, present their work at Audiology Capstone Day!

Hamilton Lab takes on UTCARE 2024

We had four lab members share posters at UTCARE Day 2024: Arpan Patel, Garret Kurteff, Elise Rickert, and Rajvi Agravat.

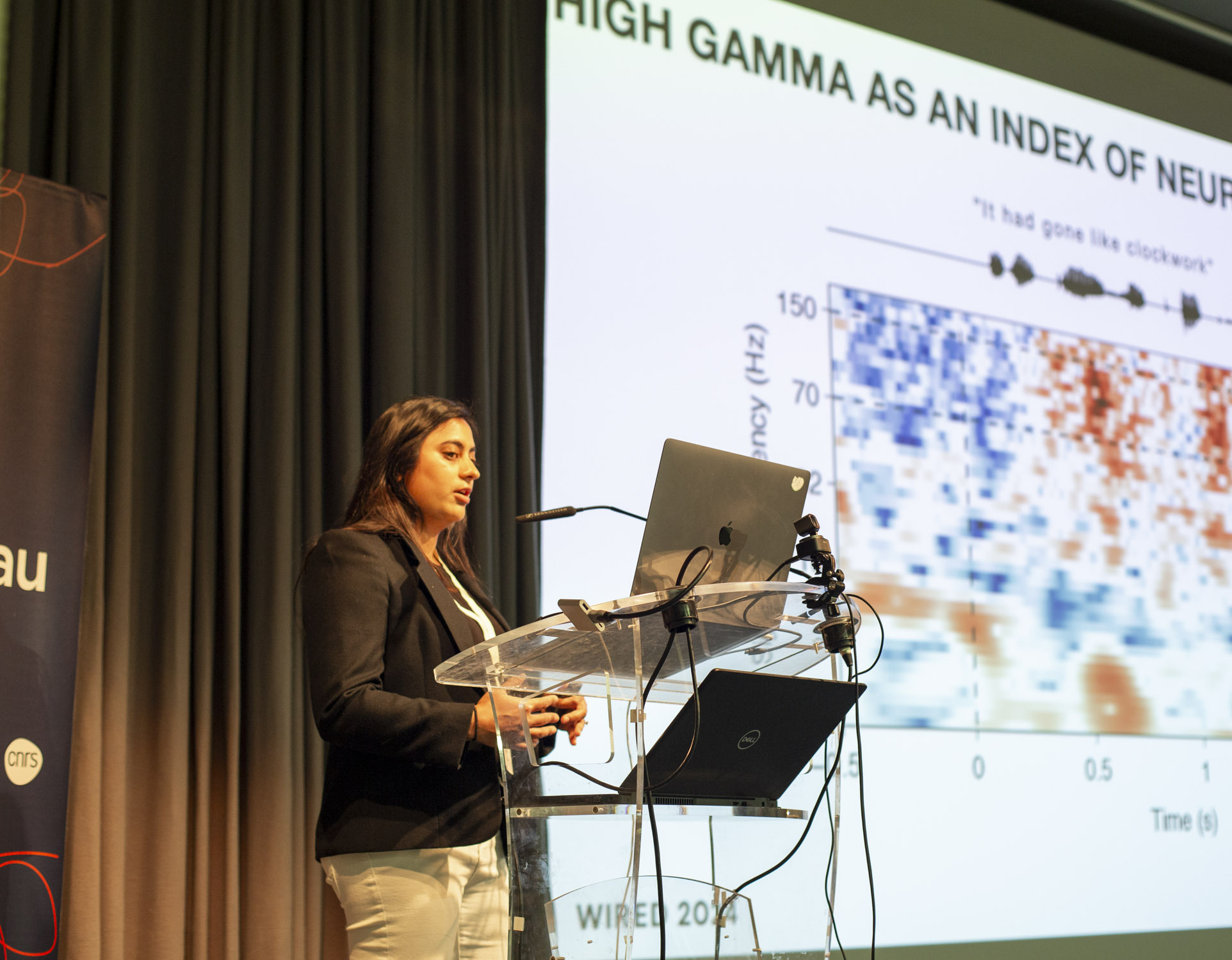

Maansi Goes to WIRED 2024 in Paris, France

Maansi presented her work on sEEG recordings using movie trailers and led a training on sEEG preprocessing using MNE-Python.

Congratulations to Garret and Rajvi!

GL Kurteff and Rajvi Agravat were recognized at the TSHA breakfast for receiving awards from the Texas Speech Language Hearing Foundation. GLK received the Sandy Friel-Patti Research in Language International Travel award, and Rajvi received the Elisabeth Wiig Doctoral Student Research award. Rajvi's award will go toward recruiting healthy participants for an EEG experiment testing whether game play and exercise can improve auditory attention.

Congrats Gabby!

Gabby, our Research Coordinator at Texas Children's Hospital (TCH) in Houston, has been accepted to medical school! She will be part of the next class of medical students at the Texas College of Osteopathic Medicine in Fort Worth.

February 2024 - ARO

Liberty presented at the Association for Research in Otolaryngology Young Investigator Symposium. Her talk was about using intracranial recordings (iEEG) to understand acoustic and phonological processing in children and adolescents.

New Publication Alert

Liberty has published an article for the Oxford Research Encyclopedia of Neuroscience entitled "Neural Processing of Speech Using Intracranial Electroencephalography: Sound Representations in the Auditory Cortex".

Congrats Alyssa!

In December 2023, Alyssa graduated with her MEd in Talent Development from Texas State University. She pursued her degree while working full-time as a research assistant (RA) for the lab. She has since been promoted to Research Program Coordinator for the lab.

Garret Kurteff is a William Orr Dingwall Dissertation Fellow

Garret Lynn Kurteff has received the William Orr Dingwall Dissertation Fellowship in the Cognitive, Clinical, and Neural Foundations of Language, which is a competitive $40,000 fellowship for doctoral candidates studying the neuroscience of language.

Check out this paper!

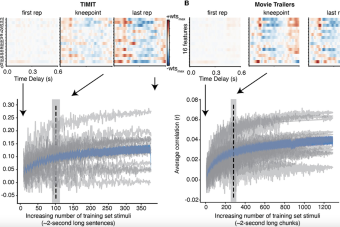

Maansi Desai, Alyssa Field, and Dr. Liberty Hamilton recently published a paper on dataset size considerations for modeling EEG responses to natural stimuli.

Maansi Desai's PhD Defense

Maansi Desai defended her PhD dissertation on June 21, 2023. She has stayed on in the lab as a postdoctoral fellow.

Alyssa Field is awarded the 2023 Moody College Impact Staff Award

Congratulations to Alyssa Field, our Research Associate, for receiving the Moody College Impact Staff Award. The Moody Impact Staff Award recognizes two staff members who go above and beyond their regular duties to make a significant impact at Moody.

May 2023: Malinda Mullet is a Recipient of the Sertoma Scholarship

Malinda Mullet has been selected to receive the Sertoma Scholarship for excellence in audiology and her record of service to the community.

Erica receives a TSHA award January 2023

Erica McVey receives a Texas Speech-Language-Hearing Association award for an EEG project on speech-in-noise processing in ASL interpreters.

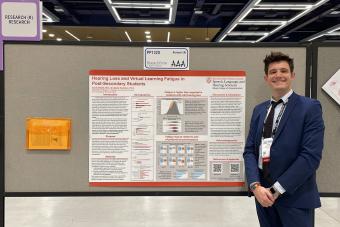

Jacob Cheek goes to American Academy of Audiology

April 2023 - AuD student Jacob Cheek presents his work on hearing loss and virtual learning fatigue at the American Academy of Audiology meeting in Seattle, WA.

Garret receives TSHA award January 2023

Garret Kurteff receives a Texas Speech-Language-Hearing Association award for an EEG project on the role of audio-motor feedback in apraxia of speech.

APAN & SfN 2022

Maansi will be presenting at APAN and SfN 2022.

Dr. Liberty Hamilton is awarded the Ellen A. Wartella Distinguished Research Award

Dr. Liberty Hamilton is awarded the Ellen A. Wartella Distinguished Research Award by the Moody College of Communication for her article “Parallel and distributed encoding of speech across human auditory cortex”

October 2022: Dr. Liberty Hamilton is a Kavli Fellow

Dr. Liberty Hamilton has been selected as a Kavli Fellow by the National Academy of Sciences. Dr. Hamilton participated in the Israeli-American Frontiers of Science Symposium, held in Irvine, CA on October 19-21.

Walk to End Epilepsy 2022

Some of our lab members attended the Walk to End Epilepsy ATX in October 2022.

NIH R01 Supplement

Elise has received funding through an NIH R01 supplement.

OVPR special research grant recipient

Jacob Cheek receives an OVPR special research grant to study virtual learning fatigue in students with hearing loss.

Cold Spring Harbor Neurobiology of Language Attendance

Garret attended and presented at the Cold Spring Harbor Neurobiology of Language conference in summer 2022.

Lab Goes to ARO 2022!

Garret Kurteff is presenting a poster on Stereo EEG Mapping of Sensorimotor Responses to Self-Generated Speech. Dr. Hamilton and Maansi Desai will be giving talks during Symposium 34: Insights From Naturalistic Stimuli for Models of Human Speech Perception.

Check out this feature!

Dr. Hamilton is featured in a new Quanta Magazine article about speech processing research in both the Hamilton Lab and the Chang Lab at UCSF.

Check out Dr. Liberty Hamilton's feature in this Dell Med Report

Check out this report on how the Hamilton Lab is part of an interdisciplinary study aimed at shielding critical brain functions during surgery.

August 2021: The Lab welcomes two new members!

The Hamilton Lab welcomes Malinda Mullet and Elise Rickert. Malinda is interested in speech and language development outcomes and is pursuing her Au.D. Elise is a first-year Au.D. student who is interested in the neuroplasticity of the auditory cortex and speech perception.

April 12-15, 2021 - TSHA

Garret, Maansi, and Liberty will present their research on understanding naturalistic speech perception and production using scalp EEG at the Texas Speech-Language-Hearing Association Annual Convention.

April 2021 - Front Cover of Epilepsia!

We are excited to announce that our paper with Dr. Jon Kleen and collaborators at UCSF on visualization of seizure activity in epilepsy made the cover of the April 2021 issue of Epilepsia.

March 22, 2021 - Welcome Donise Tran!

The lab welcomes Donise Tran as a new undergraduate research assistant. Donise is currently pursuing her undergraduate degrees in both Neuroscience and Linguistics alongside a minor in American Sign Language. Donise will be assisting Maansi on some new projects in the lab.

March 2021 - Maansi Desai receives research grant from TSHA

Congratulations to Maansi Desai, who recently received a research grant from the Texas Speech-Language-Hearing Foundation to investigate subcortical speech-in-noise processing in hidden hearing loss.

February 8, 2021 - Welcome Alyssa Field!

The lab welcomes Alyssa Field as a new postbac research assistant. Alyssa did her undergraduate studies at UT Austin and graduated with a BA in Linguistics, a minor in Italian, and a certificate in Digital Humanities. Alyssa will be supporting our collaborative efforts acquiring intracranial data at Dell Children's Medical Center and Texas Children's Hospital.

January 2021 - Jacob Cheek joins our research group as an AuD student

Jacob has joined the lab to do independent research on Zoom fatigue and its effects on education for Deaf students. Jacob has also recently joined the UT Austin AuD program as a clinical doctoral student. Welcome back, Jacob!

January 25, 2021 - Welcome, Sahar Hashemgeloogerdi!

The lab welcomes Dr. Sahar Hashemgeloogerdi, a new postdoctoral scholar who joins us from Mark Bocko's laboratory at the University of Rochester. Sahar brings her experience in acoustics and speech enhancement in noisy, reverberant environments to the lab.

December 2020 - Congratulations to Garret Kurteff for completing his Master's thesis!

Garret Kurteff has completed his Master's Thesis in Speech, Language, and Hearing Sciences. It is entitled "Modulation of Neural Responses to Naturalistic Speech Production and Perception" and addresses methods for understanding continuous speech production using EEG, how brain responses differ during perception and production, and how these responses are modulated by expectation.

December 2020 - The lab is awarded R01 funding!

The lab was awarded R01 funding from the National Institutes of Health and the National Institute on Deafness and Other Communication Disorders. The grant, entitled "Electrophysiological Approaches to Understanding Functional Organization of Speech in the Brain," will involve our team here at UT Austin, Dell Children's Hospital, and Baylor College of Medicine in Houston, TX.

October 2020 - Liberty presents at the Sociedad Argentina de Investigación en Neurociencias

Liberty presented a talk about the lab's ongoing research at a special symposium for the Argentine Society for Research in Neuroscience (SAN) 2020. Her talk was part of the symposium "Representation of language networks. A talk between the Brain and Artificial Intelligence." Her talk was entitled "Understanding naturalistic speech processing using invasive and noninvasive electrophysiology."

October 2020 - The lab receives NIH funding for our research on speech and language in the brain

We received an R01 grant from the National Institutes of Health and the National Institute on Deafness and Other Communication Disorders to understand how the brain processes speech and language during natural perception and production, and how this changes across development. This grant is a collaborative effort with researchers and clinicians at Dell Children's Medical Center in Austin, TX and Texas Children's Hospital in Houston, TX.

October 2020 - The lab presents at APAN 2020

Maansi Desai presented her work at the Advances and Perspectives in Auditory Neuroscience (APAN) 2020 virtual conference. Her presentation was entitled "Training and evaluation of EEG encoding models for naturalistic and controlled stimuli".

October 2020 - The lab presents research at the Society for Neurobiology of Language

Garret Kurteff and Maansi Desai presented their work at the Society for Neurobiology of Language virtual meeting. Garret's presentation was entitled "Methods for Investigating Continuous Speech Production and Perception with EEG." Maansi's presentation was entitled "Modeling EEG responses to audiovisual features in quiet and noisy listening situations using naturalistic stimuli."

September 2020 - The lab welcomes Brittany and Tasha!

The lab welcomes Brittany Hoang and Tasha Anslyn. Brittany is undertaking an independent study with Dr. Hamilton on speech and music processing in the brain. Tasha is participating in the Neuroscience Undergraduate Reading Program (NURP) and assisting Garret Kurteff with research on errors during naturalistic speech production as measured with EEG.

May 2020 - Congratulations to Maansi Desai on completing her Master's Thesis!

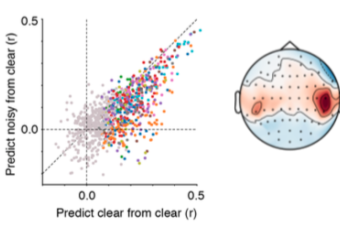

Maansi Desai has completed her Master's Thesis in Speech, Language, and Hearing Sciences. It is entitled "Neural Speech Tracking in Quiet and Noisy Listening Environments Using Naturalistic Stimuli", and addresses how the brain responds to acoustic and phonological information in both quiet, clear speech, and more naturalistic, noisy audiovisual speech.

March 2020 - "Minds at Work"

Maansi Desai was interviewed by Moody College Marketing & Communication expert Natalie England about her article in Frontiers for Young Minds.

February 2020 - Brain Stimulation to Understand Music and Language

Our paper in Frontiers for Young Minds is out! This paper explains how cortical stimulation mapping is used to understand the neural representations of speech and language in musicians, and was written for and reviewed by kids! The paper was led by PhD student Maansi Desai in collaboration with undergraduate Rachel Sorrells and our UCSF collaborators Matthew Leonard and Edward Chang.

Feb 2020 - Speech in natural noisy situations coming to OHBM!

MA-PhD student Maansi Desai and current and former undergraduates Jade Holder, Cassandra Villarreal, and Natalie Clark will have their work on using EEG to understand the processing of speech in natural, noisy situations presented at the Organization for Human Brain Mapping Conference in Montreal in June 2020.

January 2020 - Welcome Jacob and Nick!

The lab welcomes Jacob Cheek and Nick Arreguy. Jacob continues with independent study in the lab after participating in the Moody Intellectual Entrepreneurship program. Jacob is interested in speech in noise perception and word recognition in people with moderate to severe hearing loss. Nick joins as an independent study student and is learning to collect EEG data to help with a collaboration with the Aphasia Research and Treatment Lab.

October 2019 - The Lab presents at APAN and SfN

Research Assistant Ian Griffith and Liberty Hamilton presented work at the Advances and Perspectives in Auditory Neuroscience Meeting (APAN) as well as the Society for Neuroscience Meeting.

August 2019 - Congratulations to Mary Lowery!

The lab says farewell and good luck to Mary Lowery, who is pursuing her clinical fellowship (CF) in Speech Language Pathology!

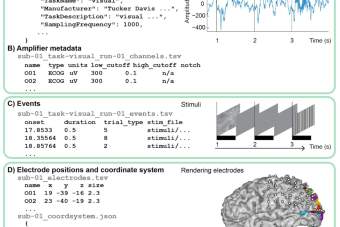

June 25, 2019 - New paper out in Scientific Data

Our new paper on standards for sharing intracranial EEG data has moved from preprint to journal, and is now out in Scientific Data! We are happy to be involved in a large consortium of iEEG and ECoG researchers who are interested in better standards for data sharing. Thanks to Chris Holdgraf and Dora Hermes for leading this project.

June 2019 - Welcome Amanda, Nicole, Paranjaya, and Natalie!

Nicole Currens, Amanda Martinez, and Paranjaya Pokharel will join the lab, helping Garret Kurteff with a speech production and perception EEG project. Natalie Miller joins the lab to assist Maansi Desai with an EEG project on natural speech perception and influences of background noise. Welcome!

May 2019 - Congratulations to Mary, Natalie, and Cassie!

Congrats to Mary Lowery, who graduated in May 2019 with her Master's degree in Speech Language Pathology, and to Natalie Clark and Cassandra Villarreal, who graduated with their bachelor's degrees in Communication Sciences and Disorders! Natalie and Cassie are going on to master's programs in Speech Language Pathology. Mary will be working in the lab over the summer and then working on her clinical fellowship (CF). We wish them all the best!

March 2019 - The Lab wins awards from the Texas Speech-Language-Hearing Foundation

Congratulations to first-year MA-PhD student Maansi Desai, who received the Elisabeth Wiig Doctoral Student Research Fund, and to Liberty Hamilton, who received the Tina E. Bangs Research Endowment Fund. The awards were presented at the TSH Foundation breakfast in Fort Worth, Texas on March 1, 2019.

July 2018 - New review on natural stimuli!

We have a new review out in Language, Cognition and Neuroscience: Hamilton & Huth, "The revolution will not be controlled: natural stimuli in speech neuroscience."

February 2019 - Welcome, Stephanie!

Stephanie Shields is a first year PhD student in the Institute for Neuroscience and has started a 10-week research rotation with our group. Welcome, Stephanie!

January 2019 - Welcome, Ian and Rachel!

The lab welcomes Ian Griffith, who joins us as a research associate, and Rachel Sorrells, who joins as an undergraduate in neuroscience. Ian brings experience in cognitive neuroscience, EEG, and computational neuroscience and will work on a project related to invariance in the auditory system. Rachel will be working on a project related to natural sound perception.

December 2018 - Making intracranial EEG data sharing easier!

We recently contributed to an excellent preprint by Christopher Holdgraf (Berkeley Institute for Data Science) and Dora Hermes (Brain Center Rudolf Magnus, UMC Utrecht) on improving reuse and external sharing of intracranial EEG data.

September 2018 - Welcome, Maansi and Garret!

Maansi Desai and Garret Kurteff join the lab as MA-PhD students in Speech, Language, and Hearing Sciences.

Welcome Cassandra, Jade, Natalie, and Noora!

The lab welcomes CSD students Cassandra Villarreal, Jade Holder, Natalie Clark, and CSD graduate Noora Raad to our group. They will be working on stimulus transcription for natural stimuli as well as some data collection!

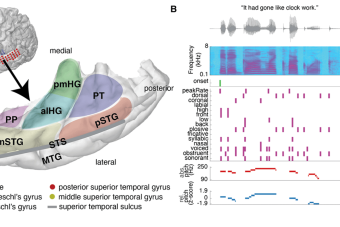

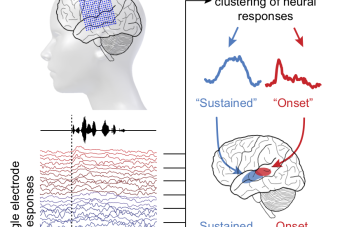

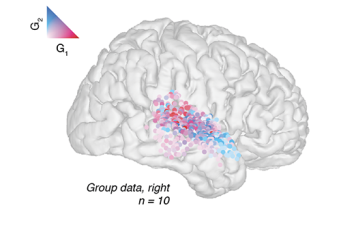

May 2018 - New paper in Current Biology!

Our paper "A spatial map of onset and sustained responses to speech in the human superior temporal gyrus" is out in Current Biology! We used ECoG and computational methods to describe a new spatial map in STG.

April 2018 - UT Care Research Day

At UT Care Research Day here at UT Austin, we presented research from the lab showing onset and sustained responses to speech and how they might be used in a speech decoder. Mary Lowery's preliminary results on speech and music were also shared with the group.

February 2018 - ARO Conference

Liberty gave a talk at the Association for Research in Otolaryngology (ARO) conference in San Diego, CA, as part of the symposium on "Linking theoretical and experimental approaches to understand auditory cortical processing". (Pictured: San Diego seagull giving suggestions for future speech production tasks).

October 31, 2017

Our new paper with colleagues from the University of California, San Francisco on localization, labeling, and warping of electrodes in electrocorticography (ECoG) is now out! The paper includes open-source Python software for electrode localization, brain plotting, and more.

October 10, 2017

A paper with our collaborators from the University of California, San Francisco Department of Neurological Surgery and Department of Neurology is now out in the journal Neurosurgery! Read how Maxime Baud and colleagues were able to apply unsupervised machine learning techniques to detecting seizure activity in intracranial EEG.

September 14, 2017

Dr. Hamilton presents her research at the 6th International Conference on Auditory Cortex in Banff, Canada on the functional organization of the human speech cortex using intracranial recordings.

August 24, 2017

Write-up from NPR on Tang, Hamilton, & Chang Science 2017. "Really? Really. How Our Brains Figure Out What Words Mean Based On How They're Said"

August 24, 2017

Write-up from Wired on Tang, Hamilton, & Chang Science 2017. "Scientists found the neurons that respond to uptalk"